It's Time for LLM Connection Strings

Simplify Model & Provider Config with llm:// URLs

Update: This article led to a draft IETF RFC for the llm:// URI scheme.

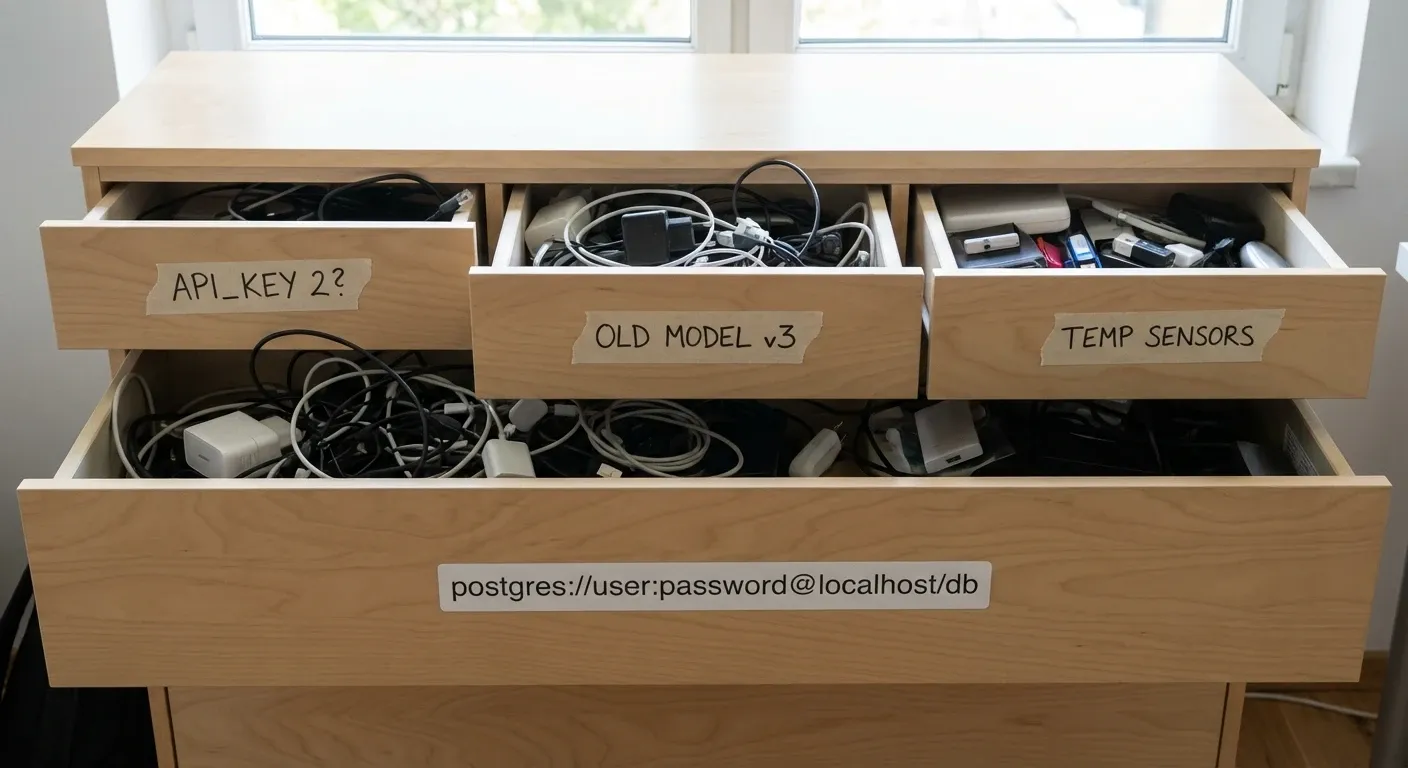

Remember the bad old days when connecting to a database meant juggling a miscellaneous grab-bag of environment variables?

It was a tower of delicate config. DB_HOST, DB_PORT, DB_USER, DB_PASSWORD, DB_NAME… or wait, was it DB_USERNAME? Is it DB_PASS or DB_PWD? Do I need the PG_* prefixes this time? And where the hell does the timeout setting go?

It was a fragile house of cards, ready to topple your production build because you forgot to capitalize HOST.

Then, someone had the brilliant idea to just use a URL¹:

postgres://user:pass@host:5432/dbname

One string. Everything you need. Universally parseable. Portable. Dare I say… beautiful?

So why are we treating LLMs like it’s 1999?

The Env Var Explosion

Right now, my .env file looks like a graveyard of abandoned API keys. OPENAI_API_KEY, ANTHROPIC_API_KEY, MISTRAL_API_KEY, GROQ_API_KEY. And don’t get me started on Azure—you need an endpoint, a deployment name, an API version, and a key just to say “hello”.

It’s not just ugly; it’s friction. Every time I want to swap a model or test a new provider, I’m rewriting initialization code, hunting down documentation for specific parameter names, and adding three more lines to my environment config.

What if we just… stole, er, borrowed the DB URL idea?

Introducing LLM Connection Strings

Imagine configuring your entire model interface with a single line:

llm://api.openai.com/gpt-5.2?temp=0.7&max_tokens=1500

llm://api.z.ai/glm-4.7?top_p=0.9&cache=true

Anatomy of an LLM Connection String

The scheme is llm://. The host is the provider’s API host (api.openai.com, api.z.ai). The path is the model name. And query parameters handle all the runtime options that usually clutter your code.
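Here’s a minimal sketch of what parsing could look like, using nothing but Python’s standard urllib.parse. The function name parse_llm_url and the type-coercion rules (true/false to booleans, then int, then float) are my own assumptions for illustration, not part of any spec:

```python
from urllib.parse import parse_qsl, urlsplit


def _coerce(value: str):
    """Best-effort typing for query values: bool, then int, then float, else str."""
    if value.lower() in ("true", "false"):
        return value.lower() == "true"
    for cast in (int, float):
        try:
            return cast(value)
        except ValueError:
            pass
    return value


def parse_llm_url(url: str) -> dict:
    """Split an llm:// string into provider host, model name and runtime options.

    Hypothetical helper: host = provider API host, path = model, query = options.
    """
    parts = urlsplit(url)
    if parts.scheme != "llm":
        raise ValueError(f"expected an llm:// URL, got scheme {parts.scheme!r}")
    return {
        "host": parts.hostname,
        "port": parts.port,  # None unless the URL spells one out
        "model": parts.path.lstrip("/"),
        "options": {k: _coerce(v) for k, v in parse_qsl(parts.query)},
    }


cfg = parse_llm_url("llm://api.openai.com/gpt-5.2?temp=0.7&max_tokens=1500")
print(cfg["host"], cfg["model"], cfg["options"])
# api.openai.com gpt-5.2 {'temp': 0.7, 'max_tokens': 1500}
```

The model name is taken verbatim from the path, so a nested name like anthropic/sonnet-4.5 survives intact.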

Need auth? Great, add it.

Just like postgres://, we can bake authentication right in:

llm://app-name:sk-proj-123456@api.openai.com/gpt-5.2?temp=0.7

Note: Yes, putting credentials in URLs can be a security risk if you’re pasting them into public logs. But many logging services can scrub these patterns for you, and honestly, are you treating your .env file much better? Verify, sanitize, and use with caution.
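Pulling the credentials back out is the easy part, since standard URL userinfo syntax already has a username slot and a password slot. The harder part is keeping the key out of your logs. A minimal sketch, assuming the key sits in the password slot (as in the example above) and using hypothetical helper names credentials and redact:

```python
from typing import Optional, Tuple
from urllib.parse import urlsplit, urlunsplit


def credentials(url: str) -> Tuple[Optional[str], Optional[str]]:
    """Return (app name, API key) from the URL's userinfo; None for missing parts."""
    parts = urlsplit(url)
    return parts.username, parts.password


def redact(url: str) -> str:
    """Mask the API key so the connection string is safer to log."""
    parts = urlsplit(url)
    if parts.password is None:
        return url  # nothing secret to hide
    # Keep the host[:port] text exactly as written, i.e. everything after the last '@'.
    host = parts.netloc.rpartition("@")[2]
    return urlunsplit(parts._replace(netloc=f"{parts.username}:***@{host}"))


url = "llm://app-name:sk-proj-123456@api.openai.com/gpt-5.2?temp=0.7"
print(credentials(url))
# ('app-name', 'sk-proj-123456')
print(redact(url))
# llm://app-name:***@api.openai.com/gpt-5.2?temp=0.7
```

One caveat: urlsplit does not percent-decode the username or password, so a key containing reserved characters such as @ or : would need to be percent-encoded going in and run through urllib.parse.unquote coming out.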

Resiliency? Why the hell not.

Many database drivers let you list several hosts in one connection string and fail over between them. Why shouldn’t our AI agents have the same reliability?

llms://primary.gpt,backup.gpt/gpt-6?temp=0.9

The s in llms:// isn’t a typo; it’s a hint that we’re dealing with multiple hosts. If primary.gpt hangs, the client automatically falls back to backup.gpt. No complex router logic required.
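What might that failover look like on the client side? Here’s a minimal sketch that tries each comma-separated host in order and returns the first success. The helper names, the in-order policy, and the send callback (a stand-in for whatever actually issues the API request) are assumptions for illustration; a production client would also want timeouts, backoff, and narrower exception handling:

```python
from typing import Callable, List, TypeVar
from urllib.parse import urlsplit

T = TypeVar("T")


def hosts(url: str) -> List[str]:
    """Split the comma-separated host list from an llms:// URL, ignoring credentials."""
    host_list = urlsplit(url).netloc.rpartition("@")[2]
    return [h for h in host_list.split(",") if h]


def call_with_failover(url: str, send: Callable[[str], T]) -> T:
    """Try each host in order and return the first successful response."""
    errors = []
    for host in hosts(url):
        try:
            return send(host)
        except Exception as exc:  # a real client would catch timeouts / 5xx specifically
            errors.append(f"{host}: {exc!r}")
    raise RuntimeError("all hosts failed: " + "; ".join(errors))


def fake_send(host: str) -> str:
    # Stand-in for a real HTTP call: pretend the primary is hanging.
    if host == "primary.gpt":
        raise TimeoutError("no response")
    return f"answered by {host}"


print(call_with_failover("llms://primary.gpt,backup.gpt/gpt-6?temp=0.9", fake_send))
# answered by backup.gpt
```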

One string with everything from your auth to your endpoint to your hyperparameters.

Alternative Formats

I’m not married to llm://. The specific scheme matters less than the standard itself.

I could imagine a world where we use provider-specific schemes for brevity, while keeping the standard structure:

ollama://localhost:11434/llama3

vercel://anthropic/sonnet-4.5?temp=0.8&web_search={"maxUses":3}

bedrock://us-west-2.aws/anthropic/sonnet-4.5?temp=0.8&cacheControl=ephemeral

Regardless of the exact syntax, the core benefits are undeniable:

- Portability: Copy & paste your entire config from a local script to a cloud worker.

- CLI Friendly: Pass a single argument to your scripts.

  my-agent --model "llm://..."

  beats

  my-agent --model gpt-4 --temp 0.7 --key $KEY --host ...

- Language Agnostic: Almost every programming language ships a robust URL parser, usually in its standard library. We get parsing and validation for free.
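On the CLI side, the whole model config collapses into one flag. A minimal sketch with Python’s argparse, where my-agent, the --model flag, and the returned dictionary layout are all hypothetical:

```python
import argparse
from urllib.parse import parse_qsl, urlsplit


def parse_args(argv=None) -> dict:
    """Parse the CLI: the whole model config arrives as one --model URL."""
    parser = argparse.ArgumentParser(prog="my-agent")
    parser.add_argument(
        "--model",
        required=True,
        help='LLM connection string, e.g. "llm://api.openai.com/gpt-5.2?temp=0.7"',
    )
    args = parser.parse_args(argv)
    parts = urlsplit(args.model)
    if parts.scheme != "llm":
        parser.error(f"unsupported scheme: {parts.scheme!r}")  # exits with status 2
    return {
        "host": parts.hostname,
        "model": parts.path.lstrip("/"),
        "api_key": parts.password,  # None when the URL carries no credentials
        "options": dict(parse_qsl(parts.query)),  # values stay strings here
    }


config = parse_args(["--model", "llm://app-name:sk-proj-123456@api.openai.com/gpt-5.2?temp=0.7"])
print(config["host"], config["model"], config["options"])
# api.openai.com gpt-5.2 {'temp': '0.7'}
```

Swapping providers then means changing one argument instead of four flags and an environment variable.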

The database world took decades to figure this out.

Good news: in AI timelines, that’s only about half a vibe-year.

The Verdict

We don’t need another complex configuration standard or a new YAML-based manifest file. We just need to use the one tool that’s been working for the rest of the internet for the last 30 years.

Let’s stop reinventing the wheel and start treating our LLM connections with the same respect we give our databases. Your .env file (and your sanity) will thank you.