The demo shipped. Then the real questions started: why does this cost so much, why did it say that, and what happens when it runs unsupervised?

AI Consulting

The demo worked great. Production has opinions.

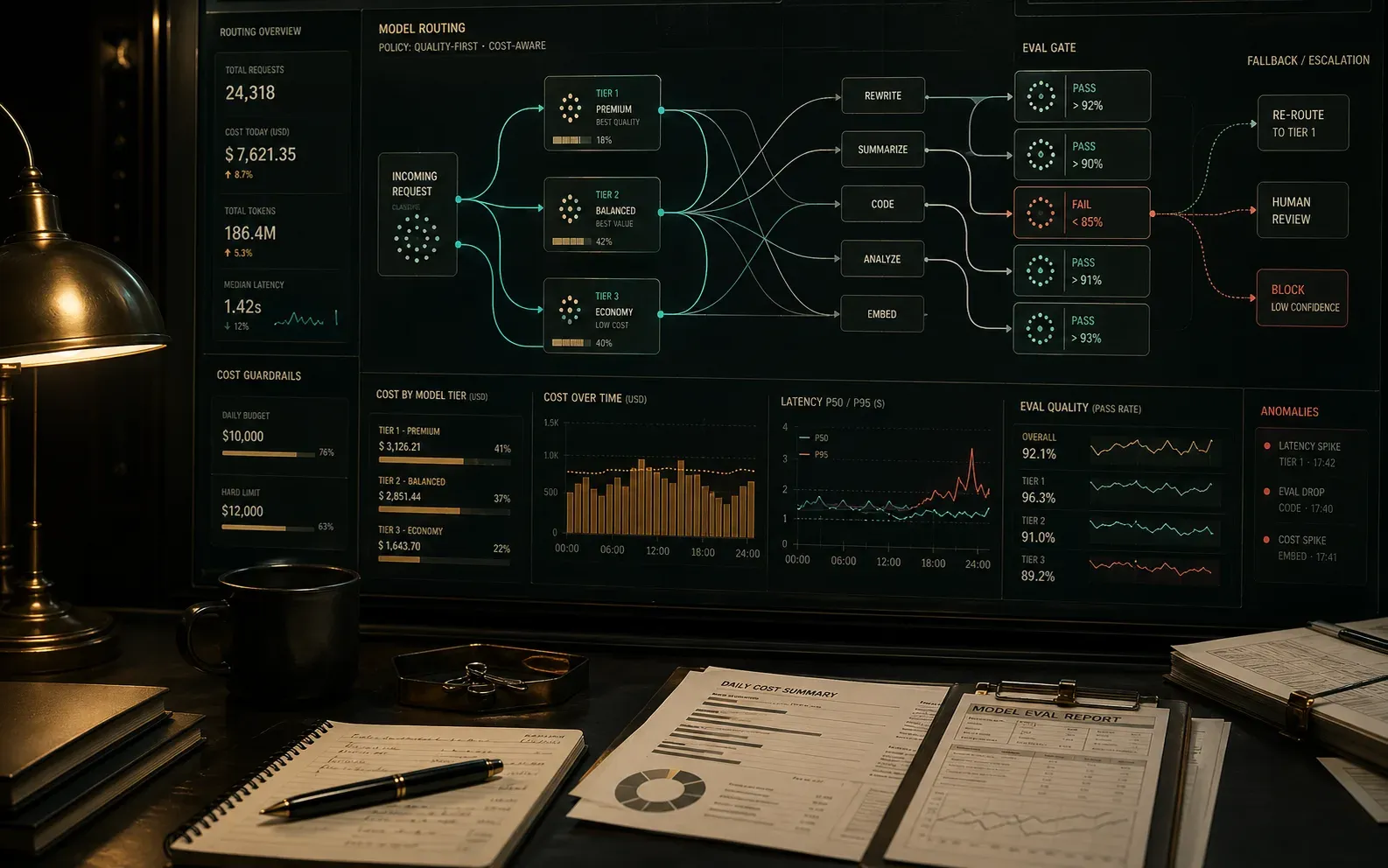

I help teams move from impressive demos to agentic systems that hold up — with clear routing, measurable quality, useful traces, and eval gates. That can mean redesigning model routing, adding token observability, building eval suites, or turning a fragile prototype into a production system your team can actually reason about.

Best fit

AI Consulting and Cost Optimization

- Agentic architecture review across prompts, traces, tools, models, and data flow

- Test and eval plan for quality, regressions, autonomy boundaries, and cost

- Model routing, caching, batching, and prompt strategy recommendations

- Implementation support for the highest-leverage fixes

Common token cost reduction target

Quality checks before agent autonomy

Spend and quality tied to features

Why teams call

The pattern I keep seeing.

Once usage grows, the 'we'll fix it later' prompt and routing decisions start shaping product behavior in ways nobody planned for.

Teams need practical architecture judgment that balances quality, latency, safety, and cost — without treating evals as something you do after the incident.

What changes

What actually gets better.

Identify the features, prompts, routes, and user patterns driving the majority of AI spend.

Design model-routing, tool-use, and caching strategies that reserve heavier work for tasks that truly need it.

Add token, trace, and eval observability so product, engineering, and finance can reason from the same facts.

Turn architecture improvements into measurable changes your team can keep operating after the engagement.
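As a rough illustration of the first item above, here is a minimal sketch of finding which features drive most of the spend. It assumes every model call is already logged with a feature label and token counts. The `UsageRecord` shape, the field names, and the per-million-token prices are all hypothetical, not any vendor's schema or pricing.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class UsageRecord:
    feature: str                  # hypothetical product feature label attached at call time
    input_tokens: int
    output_tokens: int
    input_price_per_mtok: float   # assumed USD price per million input tokens
    output_price_per_mtok: float  # assumed USD price per million output tokens

    @property
    def cost(self) -> float:
        return (self.input_tokens * self.input_price_per_mtok
                + self.output_tokens * self.output_price_per_mtok) / 1_000_000


def top_spend_drivers(records, share=0.5):
    """Return the fewest features (largest first) that together cover `share` of total cost."""
    totals = defaultdict(float)
    for record in records:
        totals[record.feature] += record.cost
    grand_total = sum(totals.values())

    drivers, running = [], 0.0
    for feature, cost in sorted(totals.items(), key=lambda kv: kv[1], reverse=True):
        # Stop once the features collected so far already cover the requested share.
        if grand_total == 0 or running >= share * grand_total:
            break
        drivers.append((feature, cost))
        running += cost
    return drivers
```

Calling `top_spend_drivers(records, share=0.8)` on a week of logged calls gives a short, ranked list to start from. The same grouping works for prompts, routes, or user segments if those labels are logged too.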

How I work

No mystery, no handoff decks.

Make behavior explainable

We connect traces, prompts, tool calls, invoices, endpoints, and product behavior so quality and cost have visible causes.

Tune the system, not just the prompt

I look at routing, context design, tools, caching, retrieval, retries, evals, and fallback behavior together because quality emerges from the system.
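To make the routing and fallback part concrete, here is a small sketch of a rule-based router that defaults to a lighter model tier and escalates only when a rule says so. The tier names, task types, and token threshold are placeholders for illustration. In a real engagement they would come from your own traces and eval results.

```python
from dataclasses import dataclass


@dataclass
class Route:
    model: str   # placeholder tier name, not a real model identifier
    reason: str  # recorded so the decision shows up in traces


# Hypothetical values; in practice these would be tuned against eval results.
MAX_LIGHT_CONTEXT_TOKENS = 4_000
HEAVY_TASKS = {"multi_step_plan", "code_review"}


def choose_route(task_type: str, context_tokens: int,
                 light_attempt_failed: bool = False) -> Route:
    """Default to the light tier; escalate only when a rule says the task needs more."""
    if light_attempt_failed:
        return Route("heavy-tier", "fallback after a failed light attempt")
    if task_type in HEAVY_TASKS:
        return Route("heavy-tier", f"task type {task_type!r} is on the heavy list")
    if context_tokens > MAX_LIGHT_CONTEXT_TOKENS:
        return Route("heavy-tier", "context exceeds the light-tier budget")
    return Route("light-tier", "default")
```

Returning the reason alongside the model is a deliberate choice: it lets a trace show why a request took the expensive path, which is usually the first question when the bill goes up.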

Ship measurable changes

The goal is not a slide deck of suggestions. It is a set of improvements your team can deploy, evaluate, and keep improving.
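One way to keep "evaluate" from being optional is an eval gate in the release path. The sketch below assumes an eval suite that returns one pass/fail result per case and a stored baseline pass rate. The function name, the regression tolerance, and the minimum case count are illustrative assumptions, not recommended values.

```python
def eval_gate(baseline_pass_rate: float, candidate_results: list,
              max_regression: float = 0.02, min_cases: int = 20):
    """Return (allowed, message) for a candidate change based on its eval results."""
    total = len(candidate_results)
    if total < min_cases:
        # Too few cases to trust the number, so block rather than guess.
        return False, f"only {total} eval cases, need at least {min_cases}"

    pass_rate = sum(1 for passed in candidate_results if passed) / total
    if pass_rate < baseline_pass_rate - max_regression:
        return False, f"pass rate {pass_rate:.1%} regressed from baseline {baseline_pass_rate:.1%}"
    return True, f"pass rate {pass_rate:.1%}"
```

Wired into CI, a `False` result fails the build, so a prompt or routing change cannot ship on the strength of a good demo alone.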

Next step

Ready to stop circling it?

Bring whatever your team keeps putting off — the scary migration, the expensive AI bill, the app that misbehaves in production. We'll figure out what's actually blocking it.