Adversarial users don't show up during happy-path testing. They show up the week after you launch, with time on their hands.

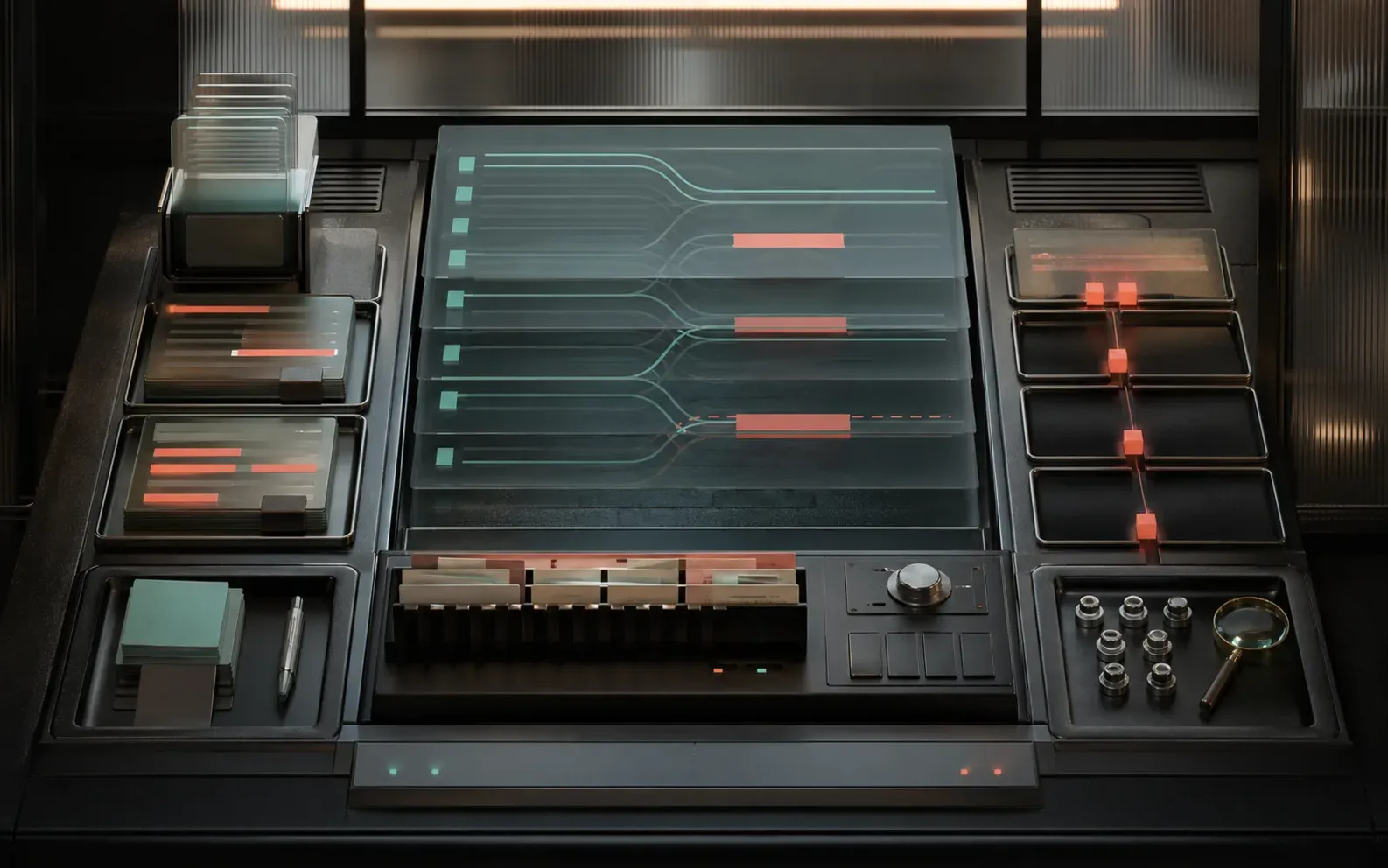

AI Guardrails

Your users are creative. More creative than your happy-path tests.

I help teams add moderation, prompt defenses, policy enforcement, evals, and human-review escape hatches that fit the product they actually have today. The goal is not security theater. It's practical guardrails that let agentic features keep moving, backed by evidence that they're not going sideways.

Best fit

AI Guardrails Consulting

- Guardrail architecture and threat model for your current product

- Recommended moderation and eval stack with cost and latency tradeoffs

- Policy matrix for unsafe content, prompt attacks, PII, and tool misuse

- Implementation roadmap your team can ship in phases

Retrofit into existing AI flows

Lower moderation cost through layered checks

Policies engineers can implement

Why teams call

The pattern I keep seeing.

Teams often overspend on blanket moderation calls when a layered policy engine would catch most issues for far less.

A safe demo is not the same thing as a production-safe agentic workflow with logging, evals, escalation, and recovery paths.

What changes

What actually gets better.

Map every risky AI interaction across input, retrieval, tools, output, and logging.

Design layered moderation so cheap deterministic checks absorb routine policy issues before expensive model reviews fire.

Add trust controls like rate limiting, review queues, redaction, quarantine, eval gates, and escalation paths.

Document guardrail coverage so product, engineering, and leadership all understand residual risk.
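One way to make that coverage map concrete is a small stage-by-risk matrix. The sketch below is illustrative only: the stages and risk categories are the ones named on this page, while the `controls` inventory, the control descriptions, and the function names are hypothetical placeholders rather than a real product's data.

```python
from itertools import product

# Stages and risk categories as named on this page.
STAGES = ("input", "retrieval", "tools", "output", "logging")
RISKS = ("unsafe_content", "prompt_attack", "pii", "tool_misuse")

# Hypothetical inventory: (stage, risk) -> control currently in place.
controls = {
    ("input", "prompt_attack"): "static injection patterns",
    ("input", "unsafe_content"): "moderation classifier",
    ("tools", "tool_misuse"): "tool allowlist and rate limit",
    ("output", "pii"): "redaction before display",
    ("logging", "pii"): "redaction before write",
}


def coverage_gaps(controls, stages=STAGES, risks=RISKS):
    """Return every (stage, risk) cell that has no documented control."""
    return [cell for cell in product(stages, risks) if cell not in controls]


def coverage_ratio(controls, stages=STAGES, risks=RISKS):
    """Fraction of matrix cells that have a documented control."""
    total = len(stages) * len(risks)
    return (total - len(coverage_gaps(controls, stages, risks))) / total
```

An empty cell is not automatically a defect. Some cells will be accepted as residual risk, and the point of the matrix is that the acceptance is written down where product, engineering, and leadership can all see it.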

How I work

No mystery, no handoff decks.

Inspect the real traffic

We start with the prompts, retrieval payloads, tool calls, and failure modes your app already sees instead of designing for imaginary users.

Layer the controls

I combine cheap static rules and contextual classifiers with targeted model-based moderation, used only where it adds real value.
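A minimal sketch of that layering, under stated assumptions: the regex patterns, the keyword-based `classifier_score`, the `model_review` stub, and the thresholds are all hypothetical stand-ins chosen for illustration. A real deployment would swap in its own rules, a trained classifier, and an actual model call.

```python
import re
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Verdict:
    allowed: bool
    layer: str   # which layer made the decision
    reason: str


# Layer 1: cheap deterministic rules (illustrative patterns only).
BLOCK_PATTERNS = {
    "prompt_injection": re.compile(r"ignore (all )?(previous|prior) instructions", re.I),
    "ssn_like_pii": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def static_rules(text: str) -> Optional[Verdict]:
    """Block on a known-bad pattern; return None to defer to the next layer."""
    for name, pattern in BLOCK_PATTERNS.items():
        if pattern.search(text):
            return Verdict(False, "static_rules", name)
    return None


# Layer 2: stand-in for a contextual classifier; returns a risk score in [0, 1].
def classifier_score(text: str) -> float:
    risky_terms = ("password", "bypass", "exploit")
    hits = sum(term in text.lower() for term in risky_terms)
    return min(1.0, hits / 2)


# Layer 3: stand-in for an expensive model-based review.
def model_review(text: str) -> bool:
    return "exploit" not in text.lower()


def moderate(
    text: str,
    low: float = 0.25,
    high: float = 0.75,
    review: Callable[[str], bool] = model_review,
) -> Verdict:
    """Run layers cheapest-first; only ambiguous scores reach the model review."""
    verdict = static_rules(text)
    if verdict is not None:
        return verdict
    score = classifier_score(text)
    if score < low:
        return Verdict(True, "classifier", f"score={score:.2f}")
    if score >= high:
        return Verdict(False, "classifier", f"score={score:.2f}")
    return Verdict(review(text), "model_review", f"escalated at score={score:.2f}")
```

The design choice worth noticing is that every `Verdict` records which layer decided. Logging that field shows how much traffic the cheap layers absorb and how often the expensive review actually fires.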

Build for operations

The final design includes observability, human review, safe fallbacks, and incident response so the controls stay useful after launch.

Next step

Ready to stop circling it?

Bring whatever your team keeps putting off: the scary migration, the expensive AI bill, the app that misbehaves in production. We'll figure out what's actually blocking it.